Power of A/B Testing

Beyond user feedback

Presented by Evelyn J. Boettcher, Founder of DiDacTex, LLC

Developers & Data Analytics

A / B Testing

A/B testing is a randomized controlled experiment done in production.

There are two tests, A and B, in which a single variable is adjusted (the B test).

This variation might affect a user’s behavior.
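One common way to get the random split in production is to hash a user identifier into a bucket, so each user always sees the same variant. The sketch below assumes that approach. `assign_variant`, the experiment name `weekly-planner`, and the 50/50 split are illustrative choices, not something taken from this talk.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "weekly-planner",
                   b_fraction: float = 0.5) -> str:
    """Deterministically place a user in variant 'A' or 'B'.

    Hypothetical helper: the same (experiment, user_id) pair always
    lands in the same variant, and roughly b_fraction of users get B.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "B" if bucket < b_fraction else "A"


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}")] += 1
    print(counts)  # the two groups should be of similar size
```

Salting the hash with the experiment name keeps a user's assignment in one experiment from determining their assignment in another.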

Goal: Increase End User’s Objective

- Business: Income increases >> Costs of change

- Health Care: Health increases >> Side effect

Weekly Planner Choices

A

B

So why do A/B Testing?

- Why spend the resources to do tests?

- Why risk angering your customers with changes?

- I got data miners, I do not need tests!

- I am a developer, I don’t need to know the business side

Life is complicated.

Domain knowledge only gets you so far!

Subject-matter experts (SMEs) at Microsoft, Netflix, etc. find that only a small fraction of the changes they implement have the planned outcome (Sweet 2022).

Even though we are isolating a single variable, that variable interacts with a million other variables.

You simply cannot model everything or know everything.

Any change can hurt.

- Even tested changes

- Though less likely!

Change can help

- “If you’re not growing, you’re dying”

Gedanken:

Trader Jane’s has a New Pizza

Say there is a grocer called Trader Jane’s, and it wants to add a new pizza to its lineup.

However, the number of pizza types it sells must stay constant: the freezer only holds N pizzas.

They will have to remove one pizza from their lineup to add the new item.

Typical Workflow

- Marketing asks a Data Miner to rank popularity of pizza.

- The Data Miner finds the pizza that sells the least.

- Stores remove that pizza.

- Stores add new pizza.

New Pizza added!!!

Unfortunately:

A week later sales go down.

What happened?

- Don’t know, because the store implemented many changes that week!

- It’s the week after Thanksgiving and sales always go down that week.

- Turns out there was a small group of heavy spenders that loved the removed pizza.

A/B Testing helps predict which changes will increase the bottom line.

A / B Testing Limitations

A/B Testing is used to clarify a vision, but does not create vision.

For example, an ophthalmologist quickly gives you a series of two choices (1 or 2? 2 or 3?) that lead to sharper vision. Their test, like A/B Testing, cannot give you vision.

Though without clarity, a vision has serious limitations.

A/B Testing and DevOps

The Three Ways for DevOps

The First Way: Principles of Flow

- Making work “visible” by defining a workflow

The Second Way: Principles of Feedback

- Have fast and constant feedback cycles throughout all stages of development

- Don’t throw it over the wall

The Third Way: Principles of Continuous Learning

- Create a culture of continual learning and experimentation

A/B Testing and the Three Ways

A/B Testing is an extension of the second and third ways.

Feedback will be the results of the A/B Testing.

However

Experimentation happens in production!

A/B Tests

The good, the bad and the ugly

Rewards

- Advance the company’s goals (make it more successful):

- Business: Profits

- Healthcare: Health

- Defence: Situational Awareness

Risks

- Tests cost time and money

- Don’t know what percent of risk is acceptable

- Medical and Defence will have a higher threshold for risk

- Upset customers

- Change can make things worse

Mitigation

- Have the smallest test possible

- 5% False Positive

- 20% False Negative

- Typical of non-life-critical changes

- Minimize number of samples

Reducing Costs

Minimal Viable Product

Need to create the smallest, fastest A/B Test that is statistically meaningful.

How do you minimize the number of samples (N)?

We want the smallest N that gives a 5% false positive rate and a 20% false negative rate.

\[ N = ? \]

Use Statistics

Statistics defines the minimum number of samples (N) as:

\[ N > \left( 2.48 \, \frac{\sigma_\Delta}{\Delta} \right)^2 \]

- \(\Delta\): How much of a difference is needed to make the change

- It costs money to make a change

- The increase to the bottom line needs to be significant to accept the risk

- Example: Trader Jane’s Pizza needs sales to increase by 3%

- \(\sigma_\Delta\): estimated from the business’s historical data

- \(\sigma_\Delta \approx \sqrt{2 \sigma_{log}^2}\)

- \(\sigma_{log}\): How much sales fluctuate over a given time period.
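As a rough worked example of the sample-size rule, the sketch below squares the whole product, \(N \ge (2.48\,\sigma_\Delta/\Delta)^2\), which is what the normal-approximation requirement \(\Delta / (\sigma_\Delta/\sqrt{N}) \ge 1.64 + 0.84\) gives. The function names and the 10% value for \(\sigma_{log}\) are assumptions made for illustration; only the 3% target comes from the Trader Jane’s example. N is read here as the number of measurements per variant, which is also an assumption.

```python
import math


def sigma_delta_from_sigma_log(sigma_log: float) -> float:
    """sigma_delta ~ sqrt(2 * sigma_log^2): spread of the difference B - A."""
    return math.sqrt(2 * sigma_log**2)


def min_samples(sigma_delta: float, delta: float, z_sum: float = 2.48) -> int:
    """Smallest whole N with N >= (z_sum * sigma_delta / delta)^2."""
    if sigma_delta <= 0 or delta <= 0:
        raise ValueError("sigma_delta and delta must be positive")
    return math.ceil((z_sum * sigma_delta / delta) ** 2)


if __name__ == "__main__":
    sigma_log = 0.10  # assumed: sales fluctuate by about 10% per period
    delta = 0.03      # from the example: sales must increase by 3%
    sigma_delta = sigma_delta_from_sigma_log(sigma_log)
    print(min_samples(sigma_delta, delta))  # 137
```

Because N scales with \((\sigma_\Delta/\Delta)^2\), halving the required gain \(\Delta\) roughly quadruples the number of samples needed.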

Important

Unless there is a clear, measurable advantage, no change should occur.

There is no guarantee that change will be effective.

Bias and Harm

In addition, our testing and product should do no harm.

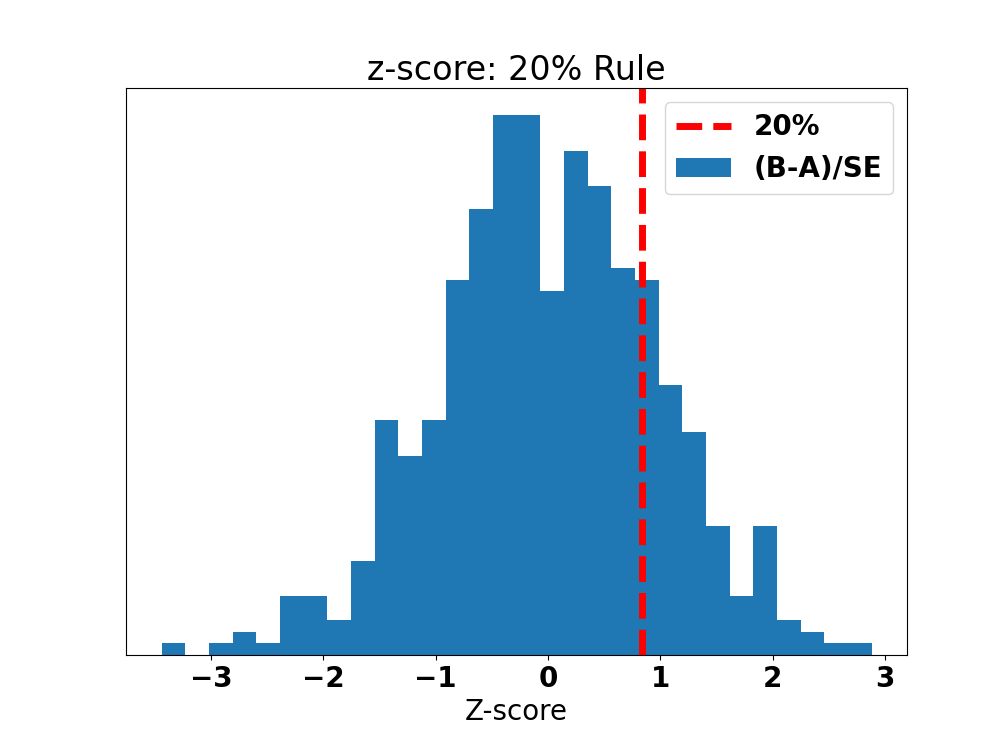

Where does 2.48 come from?

\[ N > \left( 2.48 \, \frac{\sigma_\Delta}{\Delta} \right)^2 \]

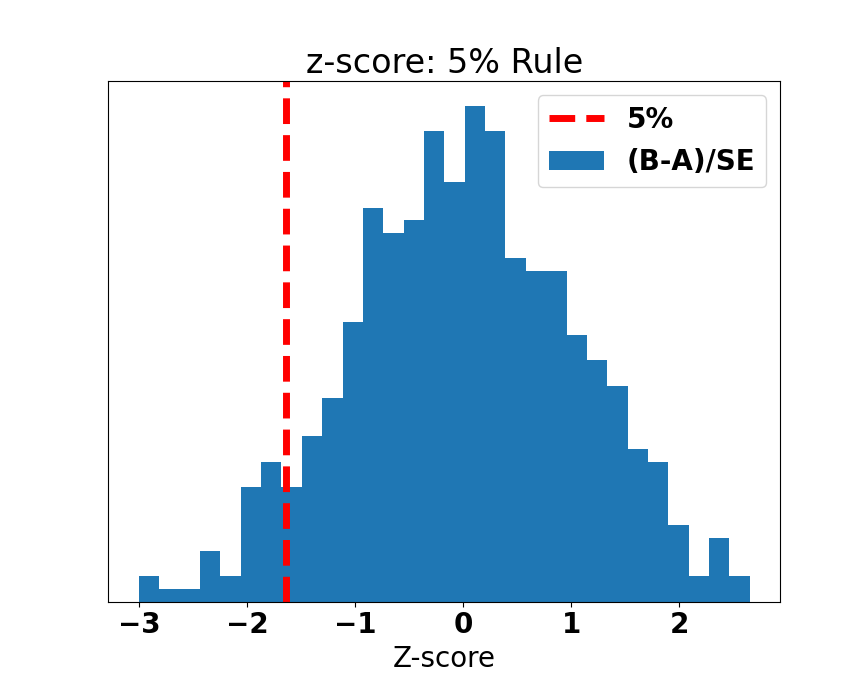

Rules of Thumb: 20 / 5 Rule

Assume there is no difference between A and B

\[ \Delta = B - A = 0 \]

False positive

- A is better, but you implemented B

- incurs an explicit cost

False negative

- B is better, but you stuck with A

- incurs an implicit cost

\(\alpha\) == False Positive rate

- 5% => \(z_{score}\) = -1.64

You can assume B is better than A

\(\beta\) == False Negative rate

- 20% => \(z_{score}\) = 0.84

You can assume A is better than B

From Standard Normal Distribution

Mean is 0

Standard deviation: \(\sigma\)

\(z_{score}\) measures the distance between a point and the mean in units of \(\sigma\)

\(z_{score}\) = -1.64 (5%)

\(z_{score}\) = 0.84 (20%)

\[ 1.64 + 0.84 = 2.48 \]
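The two z-scores can be checked against the standard normal distribution with Python's standard library. This is only a verification sketch; the function name `z_scores` is made up for the example.

```python
from statistics import NormalDist


def z_scores(alpha: float = 0.05, beta: float = 0.20):
    """Return (z_alpha, z_beta, sum) for one-sided error rates alpha and beta."""
    std_normal = NormalDist()  # mean 0, standard deviation 1
    z_alpha = std_normal.inv_cdf(1 - alpha)  # distance that leaves alpha in one tail
    z_beta = std_normal.inv_cdf(1 - beta)    # distance that leaves beta in one tail
    return z_alpha, z_beta, z_alpha + z_beta


if __name__ == "__main__":
    z_alpha, z_beta, total = z_scores()
    print(f"{z_alpha:.3f} + {z_beta:.3f} = {total:.3f}")
```

The more precise values are about 1.645 and 0.842, so the sum is closer to 2.49; the 2.48 used on the slides comes from truncating each z-score to two decimals.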

- Graph: (Pierce, n.d.)

False Positive

\(\alpha\) == False Positive rate

- 5% => \(z_{score}\) = -1.64

Assume: B is better than A

False Negative

\(\beta\) == False Negative rate

- 20% => \(z_{score}\) = 0.84

Assume: A is better than B
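To see the 5% / 20% rule in action, the sketch below simulates many A/B tests and counts how often the decision is wrong. It is a simplification under stated assumptions: the measured difference is drawn directly from a normal distribution with standard error \(\sigma_\Delta/\sqrt{N}\), B is adopted when the measured z-score exceeds 1.64, and the 10% value for \(\sigma_{log}\) is invented for the example.

```python
import math
import random


def simulate_error_rates(true_delta: float, sigma_delta: float, n: int,
                         trials: int = 20_000, z_threshold: float = 1.64,
                         seed: int = 0):
    """Estimate (false_positive_rate, false_negative_rate) by simulation."""
    rng = random.Random(seed)
    standard_error = sigma_delta / math.sqrt(n)

    def switch_rate(delta: float) -> float:
        # Fraction of simulated tests in which we would switch to B.
        switches = 0
        for _ in range(trials):
            observed = rng.gauss(delta, standard_error)
            if observed / standard_error > z_threshold:
                switches += 1
        return switches / trials

    false_positive_rate = switch_rate(0.0)               # no real gain, but we switched
    false_negative_rate = 1.0 - switch_rate(true_delta)  # real gain, but we kept A
    return false_positive_rate, false_negative_rate


if __name__ == "__main__":
    sigma_delta = math.sqrt(2) * 0.10  # assumed sigma_log of 10%
    delta = 0.03                       # required 3% gain
    n = math.ceil((2.48 * sigma_delta / delta) ** 2)
    fp, fn = simulate_error_rates(delta, sigma_delta, n)
    print(f"N={n}, false positives ~ {fp:.3f}, false negatives ~ {fn:.3f}")
```

With N chosen this way the two rates should come out near 0.05 and 0.20; a smaller N leaves the false positive rate alone but pushes the false negative rate up.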

Yay, we have our minimal viable test.

But we are not done yet.

One more thing to worry about

Can this (change/test) harm our customers?

Do no Harm: F potential

If we implement B, how F–ed up will that make the users?

If we tested B, how F–ed up will that make the users?

Social Sites

A/B Testing is being done on users without their consent or knowledge, and at scale (100,000s of users).

Group mindset has been around since the 1950s. Current research shows that our minds physically change when we work together socially (Hughes, n.d.).

So it is scary to read:

- Facebook: Tested their algorithm to see if it really does radicalize people (Zadrozny, n.d.)

- LinkedIn: Tested on 20 million users to find out how links affect people’s career/jobs (Singer, n.d.)

- Facebook: Tested on 700,000 users to see if they can make them sad (Hern, n.d.)

Health Care

- Drug Companies: OxyContin (Detrano 2022)

- 1% addiction rate advertised (from non-real-world users)

- 10-30% addiction rate in real life

Remedy

Good news

There are already strong standards for testing on human subjects.

There is the IRB (Institutional Review Board) process.

It has required and continuous training and certification: CITI training.

Bad news

Only required for companies receiving federal government funding: Universities, Air Force, Army etc.

Not required for companies that work with schools (state and local funding) and social media companies.

F potential

In light of this, I propose researchers use the F potential.

(Currently, not a real thing. Just something to think about.)

\(F_{upped}\) Potential

\[ F_{upped} = \begin{cases} \text{1,} &\quad\text{if seriously harmed}\\ \text{0.5,} &\quad\text{if slightly harmed} \\ \text{0,} &\quad\text{if not measurable}\\ \text{-0.5,} &\quad\text{becomes better} \\ \text{-1,} &\quad\text{becomes a lot better} \\ \end{cases} \]

If \(F_{upped}\) > 0, test should be a no-go.

If \(F_{upped}\) < 0, \(\Delta\) should be halved.

- i.e., \(\Delta\) is the amount of gain the company needs to make the change.

Example: Trader Jane’s Pizza needs sales to increase by 3% (\(\Delta\)).

If pizza made people better, then \(\Delta=1.5\%\).
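The go / no-go rule above is simple enough to write down directly. The sketch below is only an illustration of the proposed (and admittedly not real) F potential; the function name `adjusted_delta` and the choice to return `None` for a no-go are assumptions.

```python
from typing import Optional


def adjusted_delta(delta: float, f_upped: float) -> Optional[float]:
    """Apply the F-potential rule to the required gain delta.

    Returns None when the test should be a no-go (f_upped > 0),
    half of delta when users end up better off (f_upped < 0),
    and delta unchanged when no harm or benefit is measurable.
    """
    if f_upped > 0:
        return None
    if f_upped < 0:
        return delta / 2
    return delta


if __name__ == "__main__":
    print(adjusted_delta(0.03, -0.5))  # 0.015: pizza makes people better
    print(adjusted_delta(0.03, 0.5))   # None: slight harm, do not test
```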

One more thing to worry about

Seen and Unseen Bias

Biases can increase the F-potential.

Luckily, A/B Testing can help with both unseen and seen bias.

Example: Unseen Bias

I know of three small businesses that were started by young women in the Dayton area.

Their original logo design used a beautifully detailed font.

Unfortunately, this detailed font made the logo difficult for people like me (over 40) to read.

They literally could see their logo.

However

Their logo was not readable to me when I drove by!

This is an unseen bias.

These women (all of whom were lovely and kind) did not know that they had made a logo that could not be read by me.

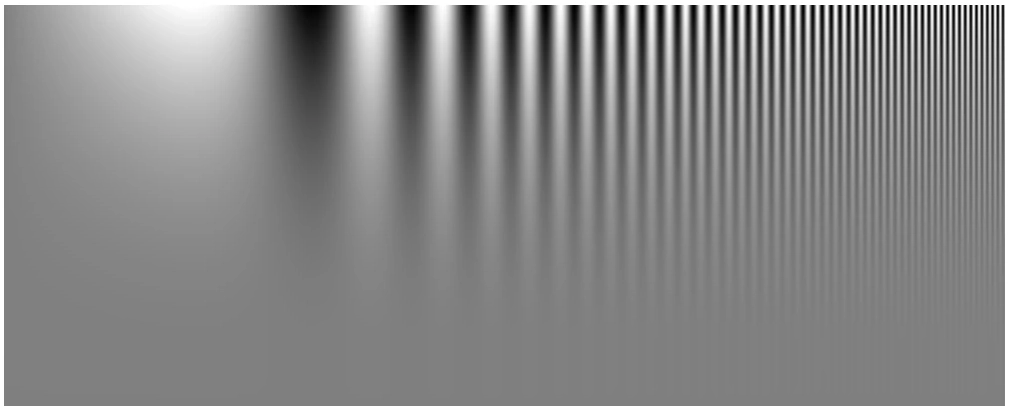

Contrast threshold function (CTF) of the Eye

The human eye’s ability to resolve a spatial frequency is dependent on contrast. This contrast threshold function will change with age.

- At ~40 your eye will need more contrast to see.

Logos evolve with Testing

Starbucks Logo Evolution

The Starbucks logo has evolved to reduce high-spatial-frequency information.

- Old Logo: High frequency information

- thin lines

- New Logo: Medium frequency:

- medium width lines

(2022)

Change Risks

- Attract more old people, alienate young

- Loyal customers might not like change

Known Bias

A/B Testing to reduce Researcher’s Bias

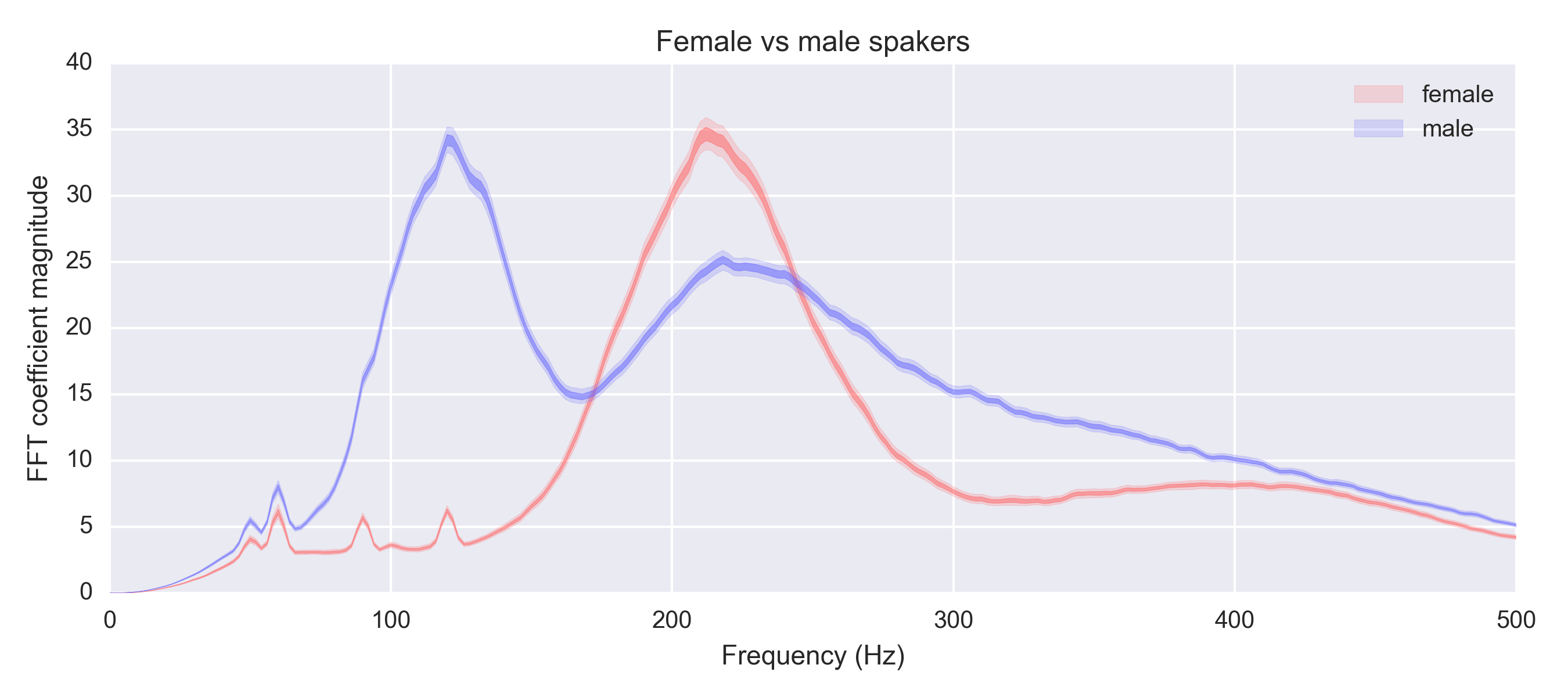

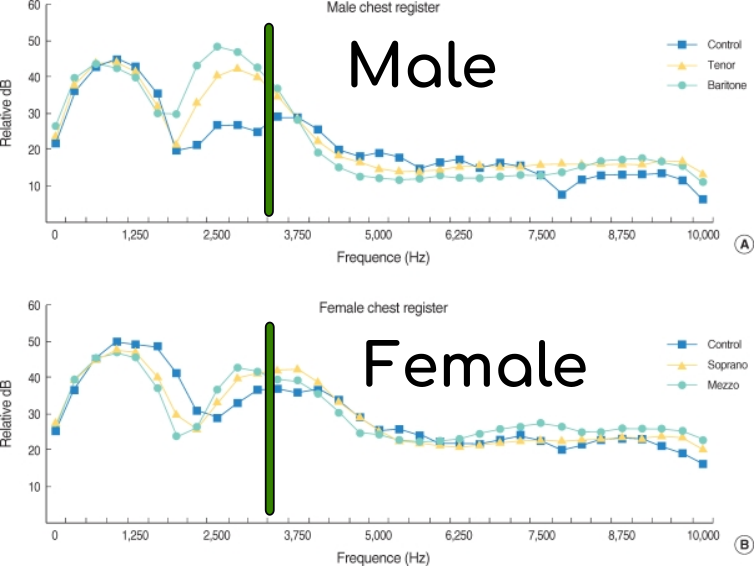

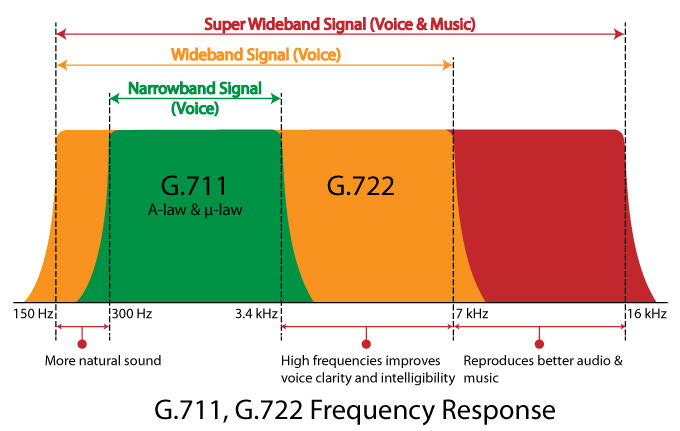

- Frequency range of Human Voice: 90Hz to 14,000Hz

- Frequency range for the Voice Spectrum over copper: 300Hz to 3,400Hz

- Men, Women and Children have different fundamental frequencies

Shrill

Most spoken consonants are in the 400 to 4,500Hz range.

Women have most of their consonant sounds in the higher frequencies.

Green Bar shows the cutoff for the voice spectrum

This caused women’s voices to sound shrill.

It also made it hard to understand what they said, since their voice was cut off.
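A back-of-the-envelope way to see the cutoff is to compare the two frequency ranges quoted above. The sketch below measures how much of the 400 to 4,500Hz consonant range fits inside the 300 to 3,400Hz voice spectrum. It uses plain linear bandwidth, which is an assumption and a crude one: it ignores how speech energy is distributed and how hearing weights frequencies.

```python
def band_overlap_fraction(signal_band, channel_band):
    """Fraction of signal_band (low_hz, high_hz) that lies inside channel_band."""
    low = max(signal_band[0], channel_band[0])
    high = min(signal_band[1], channel_band[1])
    overlap = max(0.0, high - low)
    return overlap / (signal_band[1] - signal_band[0])


if __name__ == "__main__":
    consonants = (400.0, 4500.0)      # range quoted for most consonants
    voice_spectrum = (300.0, 3400.0)  # voice spectrum over copper
    fraction = band_overlap_fraction(consonants, voice_spectrum)
    print(f"{fraction:.0%} of the consonant range is carried")
```

By this rough measure about a quarter of the consonant range is lost, and it is the upper part, where the slide places most of women's consonant sounds.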

Bias has caused women to change.

Margaret Thatcher changed her voice during her career.

- Dropped her main vocal frequency roughly 60Hz! (Tallon, n.d.)

- Almost 1/3!

Women’s voices have dropped on average over 23Hz from 1945 to 1993. (Cecilia Pemberton 1998)

Women’s voices have been becoming more manly.

Was the Voice Spectrum Biased: Yes

In 1927 a voice spectrum had to be defined.

J.C. Steinberg (from AT&T) knew that the proposed voice frequency range cut off women’s voices. He wrote a letter titled “Understanding Women”.

He states that men traditionally have an inability to understand women except when their tone is soft.

So, it is a “biological failing of women” (Tallon, n.d.) that we can’t understand them. \(\therefore\) The technology as is, is good.

Market Perspective

In A/B Testing, we focus on the question: does doing A or B make the company more successful?

- Women make up 1/2 the market.

When another company/technology comes along that covers women’s voices better, it is reasonable to assume that they will get that market share.

- Who has a landline?

My hero: HD-VOIP

Note: Narrow Band (free to use) VOIP is 300 to 3,400Hz.

Voice Bias in 21st century?

With a more diverse workforce, researcher bias will go down.

- True

But, there is still unseen bias in voice in the 21st century!

Google and Apple had a hard time getting voice recognition to work for kids (Scanlon, n.d.).

Not only do kids speak at higher frequencies than women, they have different speaking patterns.

One cannot simply take an adult’s voice and shift the frequency. So ML/AI have a hard time figuring out what kids are saying.

Why kids are important

The market for kids’ voice recognition looks set for strong growth.

Conclusion

A/B testing is a randomized controlled experiment done in production.

There are two tests, A and B, in which a single variable is adjusted (the B test).

This variation might affect a user’s behavior.

Your A/B Testing should:

- Make the company more successful.

- Follow some ethical guidelines, like the \(F_{upped}\) potential

- If \(F_{upped}\) > 0, test should be a no-go.

- If \(F_{upped}\) < 0, \(\Delta\) should be halved.

A/B Testing has Risk

No free lunch.

Even after testing, test results might not make the company more successful.

Thank you

Please check out Gem City Tech.

Gem City Tech

Gem City TECH’s mission is to grow the local industry and the community by providing a centralized destination for technical training, workshops and providing a forum for collaborating.

- Dayton Web Developers

- Dayton Dynamic Languages

- Dayton .net Developers

- Gem City Games Developments

- New to Tech

- Frameworks

- Machine Learning / Artificial Intelligence (ML/AI)

- Code for Dayton

Evelyn Boettcher ejb@DiDacTex.com

References

Taste of IT Conference